Lumina is the instant Core ML video framework you're looking for

Want Core ML? Want video? Lumina puts the two together.

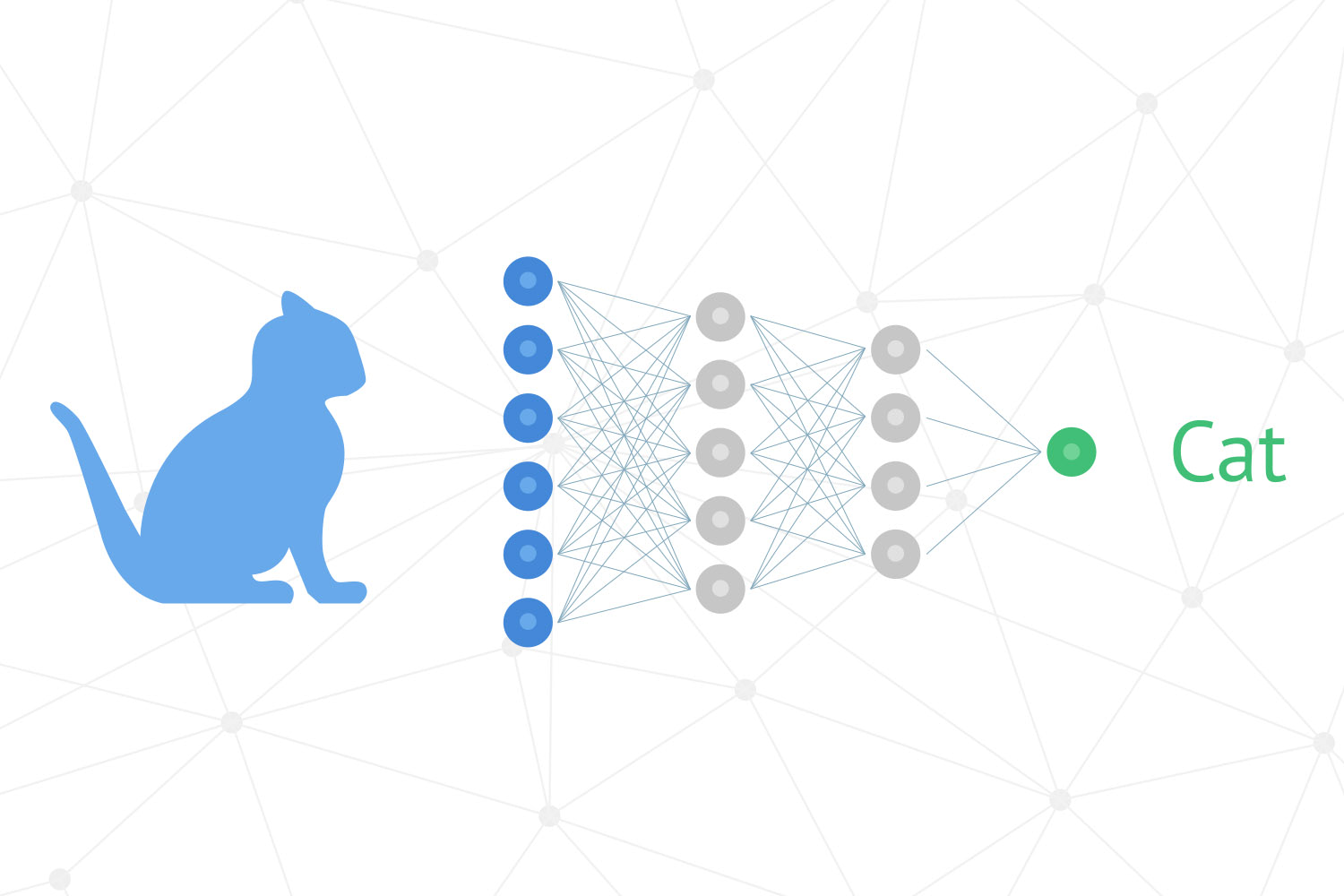

The Core ML and Vision frameworks are two of the most impressively powerful additions in iOS 11, but they are also two of the most complex – there's a big learning curve to get even basic applications working, and that can be off-putting for newcomers. While one option is to buy a good book (gratuitous plug), another is just to dive in with some code, and that's where David Okun's new Lumina framework comes in.

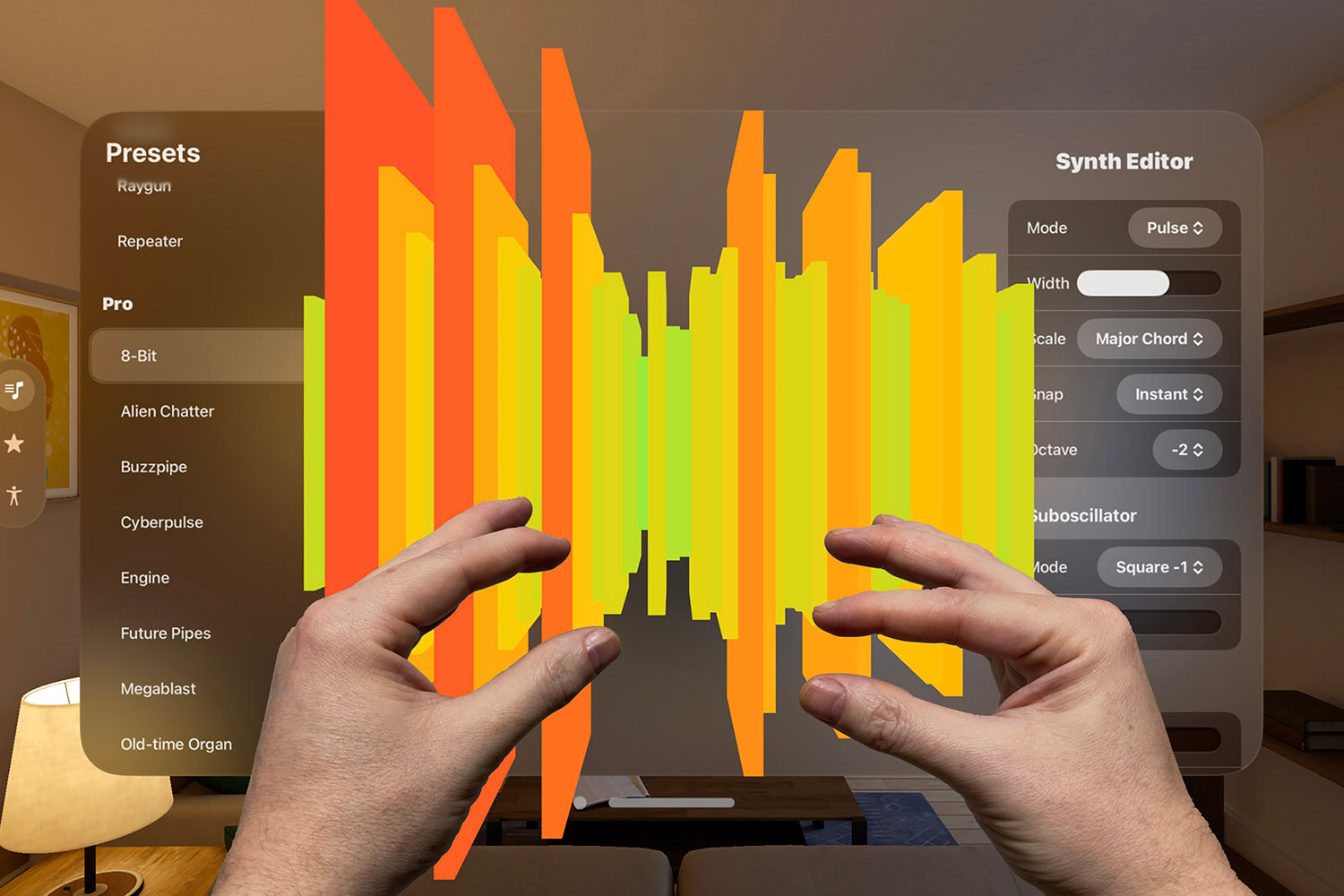

Lumina is like hitting hyperspace for your ML idea: it gets rid of all the AVFoundation heavy lifting that's required to get off the ground, swaps awkward types like CVImageBuffer for standard ones like UIImage, and provides recognition for common data types such as QR codes, barcodes, and faces. But where Lumina goes from "neat" to "awesome" is that it comes with Core ML built in for devices running iOS 11 or later: give it any Core ML-compatible model and it will stream predictions directly to your delegate as they come in from the camera.
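To give a sense of the plumbing Lumina hides, here is a rough sketch of what you might write by hand to turn a camera frame into a UIImage and run a Core ML model over it with Vision. This is not Lumina's API: `FramePredictor` and `topPredictions(_:limit:minimumConfidence:)` are hypothetical names for illustration, the model is whatever `MLModel` you supply, and the capture session that delivers each `CVPixelBuffer` (normally via `AVCaptureVideoDataOutputSampleBufferDelegate`) is left out entirely.

```swift
import Foundation
#if os(iOS)
import UIKit
import CoreImage
import CoreML
import Vision
#endif

// Hypothetical helper (not part of Lumina): keep the best few labels whose
// confidence clears a threshold, sorted highest confidence first.
func topPredictions(_ results: [(label: String, confidence: Float)],
                    limit: Int = 3,
                    minimumConfidence: Float = 0.1) -> [(label: String, confidence: Float)] {
    let confident = results.filter { $0.confidence >= minimumConfidence }
    let sorted = confident.sorted { $0.confidence > $1.confidence }
    return Array(sorted.prefix(limit))
}

#if os(iOS)
// Hypothetical wrapper showing the Vision + Core ML steps for a single frame.
final class FramePredictor {
    private let visionModel: VNCoreMLModel
    private let ciContext = CIContext()

    init(model: MLModel) throws {
        // Wrap any Core ML model so Vision can drive it.
        visionModel = try VNCoreMLModel(for: model)
    }

    // Render a raw camera frame into a UIImage via Core Image.
    func image(from pixelBuffer: CVPixelBuffer) -> UIImage? {
        let ciImage = CIImage(cvPixelBuffer: pixelBuffer)
        guard let cgImage = ciContext.createCGImage(ciImage, from: ciImage.extent) else {
            return nil
        }
        return UIImage(cgImage: cgImage)
    }

    // Classify one frame and pass back the best labels.
    func predict(on pixelBuffer: CVPixelBuffer,
                 completion: @escaping ([(label: String, confidence: Float)]) -> Void) {
        let request = VNCoreMLRequest(model: visionModel) { finished, _ in
            let observations = finished.results as? [VNClassificationObservation] ?? []
            let pairs = observations.map { (label: $0.identifier, confidence: $0.confidence) }
            completion(topPredictions(pairs))
        }
        let handler = VNImageRequestHandler(cvPixelBuffer: pixelBuffer, options: [:])
        do {
            // perform(_:) is synchronous; the request's handler runs before it returns.
            try handler.perform([request])
        } catch {
            completion([])
        }
    }
}
#endif
```

Multiply that by session setup, permissions, threading, and delegate wiring, and it's easy to see why a framework that does it all for you is appealing.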

All the magic is done using a single LuminaViewController that you configure and present. Should it stream frames or deliver them on completion? Which camera do you want to use? Do you need maximum quality or can you sacrifice quality for speed? Should the user be allowed to zoom, and by how much? And of course the important one: which Core ML model should it use for predicting the contents of the camera feed?
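As an example of just one of those knobs, here is roughly how you might honour a zoom limit by hand with AVFoundation. It's a sketch under stated assumptions rather than Lumina's implementation: `clampedZoomFactor(requested:userLimit:deviceLimit:)` and `applyZoom(_:to:userLimit:)` are hypothetical names, and `userLimit` stands in for whatever maximum zoom you'd configure for your app.

```swift
#if os(iOS)
import AVFoundation
#endif

// Hypothetical helper: keep a requested zoom between 1x (no zoom) and the
// smaller of the app's own limit and what the camera hardware supports.
func clampedZoomFactor(requested: Double, userLimit: Double, deviceLimit: Double) -> Double {
    let upperBound = max(1.0, min(userLimit, deviceLimit))
    return min(max(requested, 1.0), upperBound)
}

#if os(iOS)
// Hypothetical helper: apply a clamped zoom factor to a capture device.
func applyZoom(_ requested: CGFloat, to device: AVCaptureDevice, userLimit: CGFloat) throws {
    let factor = clampedZoomFactor(requested: Double(requested),
                                   userLimit: Double(userLimit),
                                   deviceLimit: Double(device.activeFormat.videoMaxZoomFactor))
    // Changing zoom requires an exclusive configuration lock on the device.
    try device.lockForConfiguration()
    defer { device.unlockForConfiguration() }
    device.videoZoomFactor = CGFloat(factor)
}
#endif
```

Clamping against both limits matters because the hardware maximum varies by device and camera, so a fixed app-wide cap on its own isn't enough.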

You can do all this yourself – of course you can – but why would you when Lumina does all the hard work for you? By removing most if not all of the barriers to entry for Core ML and Vision apps, I'm hopeful projects like Lumina will open this fascinating new area of development to more people.

Link: Lumina.

