What’s new in iOS 13?

All the major iOS developer and API changes announced at WWDC19

iOS 13 introduces a vast collection of changes for developers: new APIs, new frameworks, new UI changes, and more. Oh, and Marzipan! Or should that be Project Catalyst now? And dark mode! And iPadOS! And, and, and…!

Rather than trying to sum up everything that’s changed in one article, this article is a jumping-off point into many smaller articles – I’m writing individual mini-tutorials about specific changes, publishing them on a rolling basis and adding links here.

Let’s start with the big stuff…

Sponsor Hacking with Swift and reach the world's largest Swift community!

SwiftUI: a new way of designing apps

Xcode 11 introduced a new way of designing the user interface for our apps, known as SwiftUI. For a long time we’ve had to choose between the benefits of seeing our UI in a storyboard or having a more maintainable option with programmatic UI.

SwiftUI solves this dilemma once and for all by providing a split-screen experience that converts Swift code into a visual preview, and vice versa – make sure you have macOS 10.15 installed to try that feature out.

See my full article SwiftUI lets us build declarative user interfaces in Swift for more information.

- If you’d like to learn SwiftUI, you can either read my online book SwiftUI By Example or follow my 100 Days of SwiftUI course, both of which are free.
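To give a flavor of the declarative style, here’s a minimal sketch of a SwiftUI view along with the preview provider that drives Xcode’s canvas. The view name, text, and button action are placeholders of my own, not anything from Apple’s templates.

```swift
import SwiftUI

// A hypothetical screen: the body describes what the UI is, not the steps to build it.
struct ContentView: View {
    var body: some View {
        VStack(spacing: 20) {
            Text("Hello, SwiftUI!")
                .font(.title)
            Button("Tap me") {
                // Placeholder action for illustration
                print("Button tapped")
            }
        }
    }
}

// Xcode 11 uses PreviewProvider types to render the live preview beside your code.
struct ContentView_Previews: PreviewProvider {
    static var previews: some View {
        ContentView()
    }
}
```

Editing the Text or Button in code updates the preview, and changes made in the canvas are written back as code.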

UIKit: Dark mode, macOS, and more

At WWDC18 Apple announced a preview of a new technology designed to make it easy to port iOS apps to macOS. This technology – previously known to us by the name “Marzipan” but now Project Catalyst – turns out to mostly be powered by a single checkbox in Xcode that adds macOS as a target for iOS apps, which is helpful because it means it doesn’t take much for most of us to get started.

Note: Shipping iOS apps on macOS using Project Catalyst requires macOS 10.15.

There are, inevitably, a handful of tweaks required to make your app work better on the desktop – you should add code to detect Catalyst as needed.
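One way to do that detection is at compile time, using Swift’s targetEnvironment(macCatalyst) condition. This is a minimal sketch: the function name and strings are hypothetical, and in a real app you would branch on behavior (menus, window sizes, unavailable frameworks) rather than return a description.

```swift
// Returns a description of the platform this build targets.
// The #if is resolved at compile time, so only one branch is compiled into each build.
func platformDescription() -> String {
    #if targetEnvironment(macCatalyst)
    return "Running on macOS via Project Catalyst"
    #else
    return "Running on iOS or iPadOS"
    #endif
}

print(platformDescription())
```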

Also, iOS 13 brings us a system-wide dark mode, which has been requested for as many years as I can remember. Although most apps pretty much work out of the box, there’s some extra work to do to make sure your artwork looks good on both backgrounds, and if you’ve used custom colors then you should provide light and dark alternatives using the asset catalog Appearances box.

Tip: If you want to detect whether dark mode is enabled, see my article How to detect dark mode in iOS.
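As a rough sketch of the idea, UIKit exposes the current appearance through traitCollection.userInterfaceStyle, and traitCollectionDidChange(_:) fires when traits change, including a switch between light and dark. The class name and the updateAppearance() method are hypothetical.

```swift
import UIKit

// Small helper so the light/dark check can be reused outside a view controller.
func isDarkMode(_ traits: UITraitCollection) -> Bool {
    return traits.userInterfaceStyle == .dark
}

class ThemeAwareViewController: UIViewController {
    override func viewDidLoad() {
        super.viewDidLoad()
        updateAppearance()
    }

    override func traitCollectionDidChange(_ previousTraitCollection: UITraitCollection?) {
        super.traitCollectionDidChange(previousTraitCollection)

        // Only do work when the light/dark appearance actually changed,
        // not for unrelated trait changes such as size classes.
        if traitCollection.hasDifferentColorAppearance(comparedTo: previousTraitCollection) {
            updateAppearance()
        }
    }

    // Hypothetical hook: swap artwork or anything else that doesn't adapt automatically.
    private func updateAppearance() {
        if isDarkMode(traitCollection) {
            print("Dark mode is active")
        } else {
            print("Light mode is active")
        }
    }
}
```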

Prior to iOS 13, iOS developers used specific built-in colors such as UIColor.red or .blue, colors they created themselves such as UIColor(red: 0.1, green: 0.5, blue: 1, alpha: 1), and named colors from their asset catalog. However, with the introduction of dark mode in iOS 13 we need to be more careful: simple colors like .red or .blue might look good in one appearance but not the other, and custom colors built from fixed components suffer from the same issue.

UIColor in iOS 13 helps a lot here by adding new semantic colors that automatically adapt based on the user’s trait environment. For more information, see my article How to use semantic colors to help your iOS app adapt to dark mode.
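Here’s a short sketch of both approaches: a few of the semantic colors, plus a custom adaptive color built with UIColor’s iOS 13 dynamicProvider initializer, which is a code-based alternative to the asset catalog Appearances box. The brand color values are made up for illustration.

```swift
import UIKit

// Semantic colors: UIKit resolves these differently for light and dark appearances.
let background = UIColor.systemBackground
let primaryText = UIColor.label
let secondaryText = UIColor.secondaryLabel

// A hypothetical brand color that picks its components from the current traits.
let brandColor = UIColor { traits in
    if traits.userInterfaceStyle == .dark {
        return UIColor(red: 0.4, green: 0.7, blue: 1, alpha: 1)   // lighter for dark backgrounds
    } else {
        return UIColor(red: 0.1, green: 0.5, blue: 1, alpha: 1)   // the original fixed color
    }
}

// Resolving explicitly is handy when you need a concrete color for a specific appearance.
let darkVariant = brandColor.resolvedColor(with: UITraitCollection(userInterfaceStyle: .dark))
```

Views that use brandColor pick the right variant on their own; resolvedColor(with:) is only needed when you want a fixed value.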

One thing that didn’t get quite so much attention is that UIKit has seen some small design refreshes – the extreme flatness of iOS 7 is being rolled back into a beautiful middle ground reminiscent of the WWDC18 logo. For example, UISegmentedControl now has a gentle 3D effect that lifts it off the background rather than just being flat blue lines; it’s small, but most welcome.

Plus – and I admit it’s hard to believe it took 13 iOS versions for this to arrive – UIImage has seen a dramatic expansion with the addition of many (many) graphics that represent common system glyphs such as back and forward chevrons. These have been built into macOS for as long as I can remember, but iOS only ever shipped with a handful of system icons – until now.

To access the new symbols, use the new UIImage(systemName:) initializer. This takes a string for the name of the icon you want to load, presumably because there are well over 1500 of them – exposing each one as its own constant would be unwieldy. To help you find the right name, Apple made a dedicated macOS app for browsing them all, called SF Symbols, which is a free download from Apple’s developer site.

I’ve written an article showing how to create icons at custom weights and to match custom Dynamic Type fonts here: How to use system icons in your app. You have to admit: the ability to pull in over 1500 glyphs across a number of weights is quite remarkable.
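The basics look something like this sketch. The symbol names are ones I’d expect to find in the SF Symbols app, but check them there before relying on them; the sizes and weights are arbitrary.

```swift
import UIKit

// Load a system symbol by name; the initializer returns nil if the name isn't recognized.
let forward = UIImage(systemName: "chevron.right")

// Ask for a specific point size and weight.
let boldConfig = UIImage.SymbolConfiguration(pointSize: 24, weight: .bold)
let boldBack = UIImage(systemName: "chevron.left", withConfiguration: boldConfig)

// Or match a Dynamic Type text style so the glyph scales with the user's font size.
let bodyConfig = UIImage.SymbolConfiguration(textStyle: .body)
let heartView = UIImageView(image: UIImage(systemName: "heart.fill", withConfiguration: bodyConfig))
```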

Multi-window support is happening

Next time you create a new Xcode project you’ll see your AppDelegate has split in two: AppDelegate.swift and SceneDelegate.swift. This is a result of the new multi-window support that landed with iPadOS, and effectively splits the work of the app delegate in two.

From iOS 13 onwards, your app delegate should:

- Set up any data that you need for the duration of the app.

- Respond to any events that focus on the app, such as a file being shared with you.

- Register for external services, such as push notifications.

- Configure your initial scenes.

In contrast, scene delegates are there to handle one instance of your app’s user interface. So, if the user has created two windows showing your app, you have two scenes, both backed by the same app delegate.

Keep in mind that these scenes are designed to work independently from each other. So, your application no longer moves to the background, but instead individual scenes do – the user might move one to the background while keeping another open.

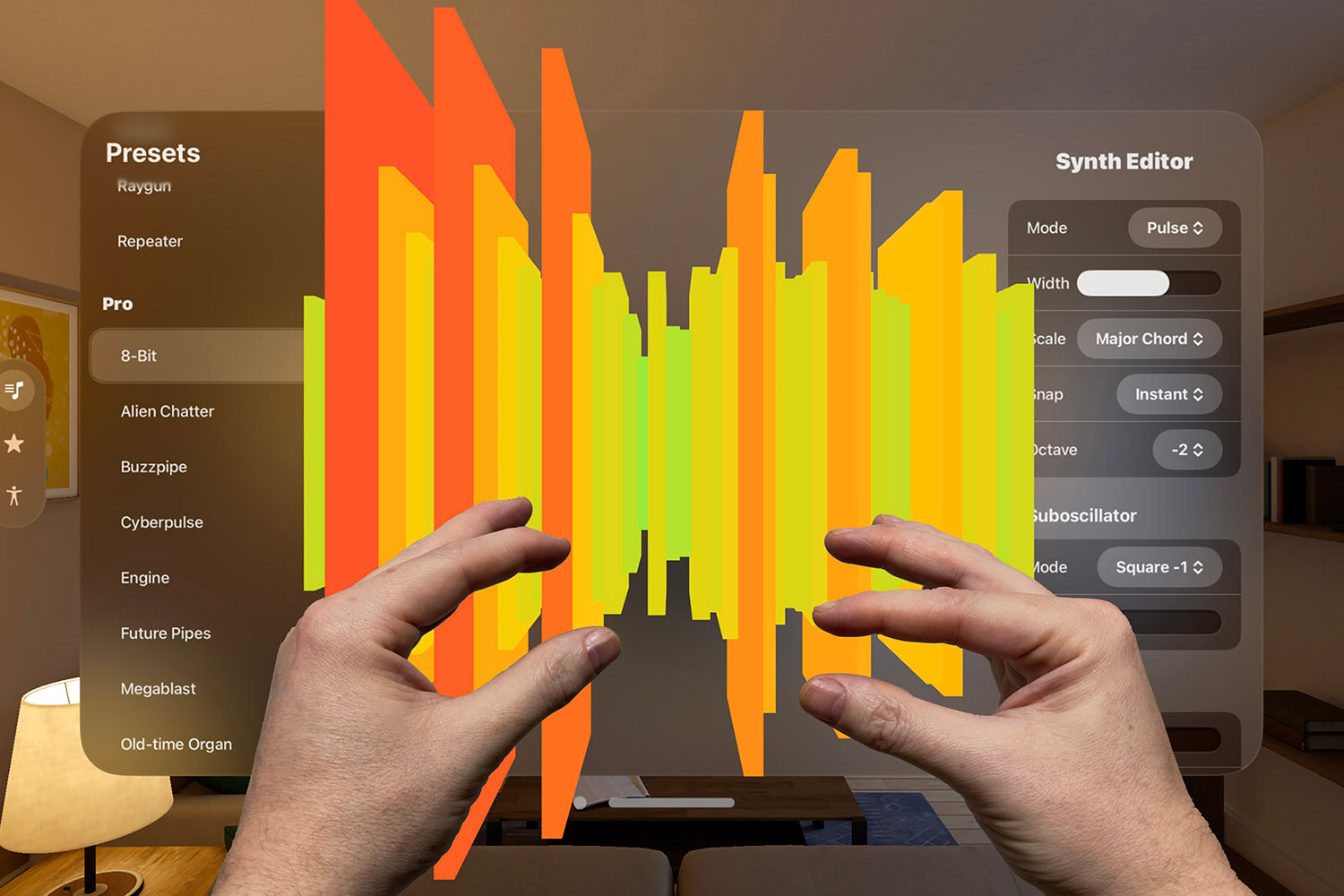
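To make the split concrete, here’s a minimal sketch of the two halves, roughly along the lines of what Xcode 11’s template generates. The configuration name "Default Configuration" is an assumption that has to match an entry in your Info.plist’s scene manifest, and the plain UIViewController root is a placeholder.

```swift
import UIKit

// App-wide responsibilities live here. (Xcode's template also marks this class @UIApplicationMain.)
class AppDelegate: UIResponder, UIApplicationDelegate {
    func application(_ application: UIApplication,
                     didFinishLaunchingWithOptions launchOptions: [UIApplication.LaunchOptionsKey: Any]?) -> Bool {
        // Set up app-wide data and register for services such as push notifications here.
        return true
    }

    // Called when a new scene is being created; returns the configuration to use for it.
    func application(_ application: UIApplication,
                     configurationForConnecting connectingSceneSession: UISceneSession,
                     options: UIScene.ConnectionOptions) -> UISceneConfiguration {
        return UISceneConfiguration(name: "Default Configuration", sessionRole: connectingSceneSession.role)
    }
}

// One instance per scene; each manages its own window and lifecycle.
class SceneDelegate: UIResponder, UIWindowSceneDelegate {
    var window: UIWindow?

    func scene(_ scene: UIScene,
               willConnectTo session: UISceneSession,
               options connectionOptions: UIScene.ConnectionOptions) {
        guard let windowScene = scene as? UIWindowScene else { return }
        let window = UIWindow(windowScene: windowScene)
        window.rootViewController = UIViewController()  // placeholder root
        self.window = window
        window.makeKeyAndVisible()
    }

    // Only this scene is moving to the background; other scenes may still be on screen.
    func sceneDidEnterBackground(_ scene: UIScene) {
        // Save this scene's state here.
    }
}
```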

Haptics are now open for everyone to customize!

iOS 13 introduces a new Core Haptics framework that lets us control precisely how the Taptic Engine generates vibrations. Think of the DualShock system from PS4 mixed in with the HD Rumble from Nintendo Switch and you’re part-way there, but Core Haptics gives us significantly more fine-grained control over how vibrations work and – critically – how they evolve over time.

This means you can fire up an instance of CHHapticEngine when your app needs to start generating haptics, then create transient effects (a single tap) or continuous effects (a buzz), all with extraordinary control over the intensity and feel (“sharpness”) of the effect. Even more impressively, the new haptic system can be given sounds to play alongside your haptics, and it will ensure the two are synchronized.

Now, I know all this sounds impressive, but there are a few provisos:

- From what I can see, you need to write some pretty significant JSON using Apple’s AHAP format (Apple Haptic and Audio Pattern) in order to create more complex effects. I suspect most of us will only make a few simple effects, so it would be great if there were an open-source collection of effects we could share.

- You can create haptics in code if you want to, and you have the same range of controls you’d have using AHAP.

- Yes, that means you can also create audio events in code, specifying things like pan, pitch, volume, attack, decay, and more – it’s remarkably powerful.

- Core Haptics won’t magically scan your audio to figure out what rhythm to use in the haptics – you need to provide timestamps for each individual tap. Core Haptics will then ensure those audio timestamps match up to your haptic timestamps so they stay synchronized.

- There are some high power costs associated with keeping the Taptic Engine active, so you can opt in to an automatic power down when haptic effects haven’t been used for a while – set isAutoShutdownEnabled to true on your CHHapticEngine.

I wrote an article to help you get started with Core Haptics: How to play custom vibrations using Core Haptics.
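Here’s a minimal sketch of building a pattern in code rather than AHAP: one transient tap followed by a short continuous buzz. The class name and the specific intensity, sharpness, and timing values are my own picks for illustration; the engine also opts in to isAutoShutdownEnabled, as described in the list above.

```swift
import CoreHaptics

// Hypothetical wrapper that owns one engine for as long as the app needs haptics.
final class HapticPlayer {
    private var engine: CHHapticEngine?

    // True only if the device supports haptics and the engine started.
    var isAvailable: Bool { return engine != nil }

    init() {
        // Not every device supports Core Haptics, so check before creating an engine.
        guard CHHapticEngine.capabilitiesForHardware().supportsHaptics else { return }

        do {
            let engine = try CHHapticEngine()
            // Let the system power the Taptic Engine down after a period without haptics.
            engine.isAutoShutdownEnabled = true
            try engine.start()
            self.engine = engine
        } catch {
            print("Haptic engine failed to start: \(error)")
        }
    }

    // Plays a sharp tap, then a softer half-second buzz starting 0.2 seconds later.
    func playTapThenBuzz() {
        guard let engine = engine else { return }

        let tap = CHHapticEvent(
            eventType: .hapticTransient,
            parameters: [
                CHHapticEventParameter(parameterID: .hapticIntensity, value: 1.0),
                CHHapticEventParameter(parameterID: .hapticSharpness, value: 1.0)
            ],
            relativeTime: 0)

        let buzz = CHHapticEvent(
            eventType: .hapticContinuous,
            parameters: [
                CHHapticEventParameter(parameterID: .hapticIntensity, value: 0.6),
                CHHapticEventParameter(parameterID: .hapticSharpness, value: 0.3)
            ],
            relativeTime: 0.2,
            duration: 0.5)

        do {
            let pattern = try CHHapticPattern(events: [tap, buzz], parameters: [])
            let player = try engine.makePlayer(with: pattern)
            try player.start(atTime: CHHapticTimeImmediate)
        } catch {
            print("Failed to play haptic pattern: \(error)")
        }
    }
}
```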

Vision gets a massive upgrade for machine learning

Apple’s Vision framework has always had some ML behind it, but with the Neural Engine capable of 600 billion operations a second on iPhone X and an eye-watering 5 trillion operations a second on iPhone XS, we’re now seeing Apple’s frameworks upgraded to perform significantly more intensive work.

So, Vision has been upgraded in several key ways:

- It can now perform optical character recognition (OCR) on documents, either in a fast mode (which is very fast) or an accurate mode, which performs all sorts of extra ML calculation to try to get the highest accuracy possible. Although this appears to be English only for now, we can provide a custom dictionary to Vision to help it with accuracy for unusual or custom terms.

- It can detect the saliency (read: importance) of an object in a scene, either using object-based scanning (what’s in the foreground vs what’s in the background), or attention-based (where your eyes are most likely to go in a scene.) I can’t imagine how much ML training went into making this happen, but it works – presumably it gives us much the same functionality as Photos uses to zoom in on various parts of pictures?

- There’s a new capture quality system that lets us identify how good a photo of someone is based on things like how centered they are.
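As a rough sketch of how the saliency and capture-quality requests are driven, both follow Vision’s usual pattern: create a request, perform it with a VNImageRequestHandler, then read the observations. The function names are hypothetical, and bounding boxes come back in Vision’s normalized coordinates (0 to 1, with the origin at the bottom left).

```swift
import Vision
import CoreGraphics

// Returns normalized bounding boxes of the salient regions Vision finds.
func salientRegions(in image: CGImage, attentionBased: Bool) throws -> [CGRect] {
    // Attention-based: where a viewer's eyes are likely to go.
    // Objectness-based: what sits in the foreground.
    let request: VNImageBasedRequest
    if attentionBased {
        request = VNGenerateAttentionBasedSaliencyImageRequest()
    } else {
        request = VNGenerateObjectnessBasedSaliencyImageRequest()
    }

    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    guard let observation = request.results?.first as? VNSaliencyImageObservation else { return [] }
    return observation.salientObjects?.map { $0.boundingBox } ?? []
}

// Returns a capture-quality score (0 to 1, higher is better) for each face found.
func faceCaptureQualities(in image: CGImage) throws -> [Float] {
    let request = VNDetectFaceCaptureQualityRequest()
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    let faces = request.results as? [VNFaceObservation] ?? []
    return faces.compactMap { $0.faceCaptureQuality }
}
```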

I’ve put together some example code to help you get started with detecting text: How to use VNRecognizeTextRequest’s optical character recognition to detect text in an image.
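For a flavor of what text recognition looks like, here’s a minimal synchronous sketch. The function name and the custom words are hypothetical; recognitionLevel is where you choose between the fast and accurate modes described above, and customWords is the custom dictionary.

```swift
import Vision
import CoreGraphics

// Returns the top candidate string for each piece of text Vision finds in the image.
func recognizeText(in image: CGImage) throws -> [String] {
    let request = VNRecognizeTextRequest()
    request.recognitionLevel = .accurate          // use .fast when speed matters more than accuracy
    request.usesLanguageCorrection = true
    request.customWords = ["SwiftUI", "Catalyst"] // hypothetical domain-specific terms

    // perform(_:) runs synchronously, so results are ready when it returns.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    let observations = request.results as? [VNRecognizedTextObservation] ?? []
    return observations.compactMap { $0.topCandidates(1).first?.string }
}
```

Because perform(_:) blocks until Vision finishes, you’d normally call this from a background queue rather than the main thread.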

CryptoKit gives us easy hashing, encryption, and more

The CommonCrypto framework has always been the go-to for performing fast cryptography, but it was hard to love – cryptography is rarely easy, and sometimes it felt like CommonCrypto really wanted you to feel how much work was happening behind the scenes.

This has all changed in iOS 13 thanks to a new Swift-only framework called CryptoKit, which provides lots of helpful wrappers around tasks like hashing, encryption, and public key cryptography.

If you want to get started, I’ve written an article here: How to calculate the SHA hash of a String or Data instance.
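The sketch below shows two common jobs: hashing a string with SHA-256, and round-tripping a message through AES-GCM encryption. The function names are hypothetical, and in a real app you would store the key somewhere safe (for example the Keychain) rather than generating a new one per call.

```swift
import CryptoKit
import Foundation

// Hashing: SHA256.hash(data:) returns a digest that can be iterated as bytes.
func sha256Hex(of string: String) -> String {
    let digest = SHA256.hash(data: Data(string.utf8))
    return digest.map { String(format: "%02x", $0) }.joined()
}

// Symmetric encryption: AES-GCM seals (encrypts and authenticates), then opens the data.
func roundTrip(_ message: String) throws -> String {
    let key = SymmetricKey(size: .bits256)  // fresh random 256-bit key
    let sealed = try AES.GCM.seal(Data(message.utf8), using: key)
    let opened = try AES.GCM.open(sealed, using: key)
    return String(decoding: opened, as: UTF8.self)
}

print(sha256Hex(of: "abc"))
```

Calling sha256Hex(of: "abc") returns ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad, the standard SHA-256 test vector for that input.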

VisionKit lets us scan documents quickly

Apple’s Notes app has a neat document scanning system built in, and now that same functionality is available to us in a new micro-framework called VisionKit. Its sole job right now is to present a dedicated view controller for document scanning, then hand back what it found.

I’ve written some example code here: How to detect documents using VNDocumentCameraViewController.
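In outline, you present the scanner, then read the pages back through its delegate. This is a minimal sketch: the view controller name and the scannedPages property are hypothetical, and because the scanner uses the camera you’ll also need an NSCameraUsageDescription entry in your Info.plist.

```swift
import UIKit
import VisionKit

class ScannerViewController: UIViewController, VNDocumentCameraViewControllerDelegate {
    // Hypothetical storage for the pages the user scanned.
    private(set) var scannedPages: [UIImage] = []

    func startScanning() {
        // Document scanning isn't available on every device.
        guard VNDocumentCameraViewController.isSupported else { return }
        let scanner = VNDocumentCameraViewController()
        scanner.delegate = self
        present(scanner, animated: true)
    }

    // Called when the user saves; the scan holds one image per page.
    func documentCameraViewController(_ controller: VNDocumentCameraViewController,
                                      didFinishWith scan: VNDocumentCameraScan) {
        scannedPages = (0..<scan.pageCount).map { scan.imageOfPage(at: $0) }
        controller.dismiss(animated: true)
    }

    func documentCameraViewControllerDidCancel(_ controller: VNDocumentCameraViewController) {
        controller.dismiss(animated: true)
    }

    func documentCameraViewController(_ controller: VNDocumentCameraViewController,
                                      didFailWithError error: Error) {
        print("Scanning failed: \(error.localizedDescription)")
        controller.dismiss(animated: true)
    }
}
```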

Core Image has another cleanup

Slowly, slowly Core Image is being dragged into the Swift century. Late last year you might have noticed that the entire Core Image documentation was moved to Apple’s “archive” documentation area, which means it’s not updated and may go away. Now, though, it looks like Apple has taken the first step towards making Core Image much safer with actual compiler-enforced types rather than strings.

I say it’s a first step because a) the documentation is almost entirely “No overview available”, and b) you specifically need to import CoreImage.CIFilterBuiltins in order to get them.
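To illustrate the difference, here’s a small sketch applying a sepia tone both ways: the older string-keyed CIFilter(name:) approach and the typed CIFilter.sepiaTone() that CIFilterBuiltins provides. The function names and intensity values are my own.

```swift
import CoreImage
import CoreImage.CIFilterBuiltins

// Old style: filter and parameter names are strings, so typos only show up at runtime.
func sepiaStringly(_ input: CIImage, intensity: Double) -> CIImage? {
    guard let filter = CIFilter(name: "CISepiaTone") else { return nil }
    filter.setValue(input, forKey: kCIInputImageKey)
    filter.setValue(intensity, forKey: kCIInputIntensityKey)
    return filter.outputImage
}

// New style: the compiler knows this filter has inputImage and intensity properties.
func sepiaTyped(_ input: CIImage, intensity: Float) -> CIImage? {
    let filter = CIFilter.sepiaTone()
    filter.inputImage = input
    filter.intensity = intensity
    return filter.outputImage
}
```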

And there's more!

iOS 13 gave us a variety of small but important improvements, including…

- How to let users choose a font with UIFontPickerViewController

- How to show a relative date and time using RelativeDateTimeFormatter

- How to use dependency injection with storyboards

- How to perform sentiment analysis on a string using NLTagger
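Three of those APIs are compact enough to sketch here. The helper names, the "Detail" storyboard identifier, and the DetailViewController type are all hypothetical; NLTagger reports sentiment as a tag whose raw value is a number from -1.0 (negative) to 1.0 (positive).

```swift
import UIKit
import NaturalLanguage

// RelativeDateTimeFormatter: human-readable offsets between two dates.
func relativeDescription(of date: Date, relativeTo now: Date = Date()) -> String {
    let formatter = RelativeDateTimeFormatter()
    formatter.unitsStyle = .full
    return formatter.localizedString(for: date, relativeTo: now)
}

// NLTagger: read the paragraph-level sentiment score and convert it to a Double.
func sentimentScore(for text: String) -> Double {
    guard !text.isEmpty else { return 0 }
    let tagger = NLTagger(tagSchemes: [.sentimentScore])
    tagger.string = text
    let (tag, _) = tagger.tag(at: text.startIndex, unit: .paragraph, scheme: .sentimentScore)
    return Double(tag?.rawValue ?? "0") ?? 0
}

// Storyboard dependency injection: iOS 13 accepts a creator closure,
// so a storyboard-backed view controller can take data through a custom initializer.
class DetailViewController: UIViewController {
    let itemName: String

    init?(coder: NSCoder, itemName: String) {
        self.itemName = itemName
        super.init(coder: coder)
    }

    required init?(coder: NSCoder) {
        fatalError("Use init(coder:itemName:) instead")
    }
}

func makeDetail(from storyboard: UIStoryboard, itemName: String) -> DetailViewController {
    return storyboard.instantiateViewController(identifier: "Detail") { coder in
        DetailViewController(coder: coder, itemName: itemName)
    }
}
```

For makeDetail(from:itemName:) to work, the storyboard scene would need the "Detail" identifier and its class set to DetailViewController.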

What are your favorite new features in iOS 13? Tweet me at @twostraws and let me know!
