Special Effects with SwiftUI

TimelineView, Canvas, particles, and… AirPods?!

Everyone knows SwiftUI does a great job of creating standard system layouts with lists, buttons, navigation views, and more, and while it’s really useful I doubt most people would call it fun.

So, in this article I’m going to show you how to use SwiftUI to build something fun, beautiful, and unlike anything you’ve seen before. You’ll need at least Xcode 13 and iOS 15 or later, and you’ll also need to download a single image from my site: https://hws.dev/spark.zip.


Getting started

At the core of our little experiment is a particle system, which is commonly used in games to create effects like fire, smoke, rain, and more. We’re going to start simple and work our way up – it’s pretty amazing how fast we can move with SwiftUI.

The first step is easy: create a new iOS project using the App template, making sure to choose SwiftUI for your interface. You should already have downloaded the spark.zip file from my site, which contains a single image. I’d like you to drag that into your project’s asset catalog, so we have an image to use for all our particles.

Next, I’d like to think about what it means to store one particle in a particle system – what does one rain drop need to store, or one snowflake? There are all sorts of values we could store, but we need only three: the X and Y coordinates for the particle, plus the date it was created.

In addition to that, I’m also going to add a conformance to the Hashable protocol, so we can add our particles to a set and remove them easily.

Create a new Swift file called Particle.swift, and give it this code:

struct Particle: Hashable {
    let x: Double
    let y: Double
    let creationDate = Date.now.timeIntervalSinceReferenceDate
}

So, that stores a single particle in our particle system – that’s one drop of rain, one spark from a fire, one piece of fairy dust, or whatever kind of particles you’re working with.
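To see what the Hashable conformance buys us, here is a minimal sketch of particles going into and out of a set. It is a simplified stand-in rather than the article's exact type: creationDate is passed in explicitly (an assumption made only so the values are predictable).

```swift
import Foundation

// Stand-in for the article's Particle. The creation date is supplied by the
// caller here (an assumption for this sketch) so the example is deterministic.
struct Particle: Hashable {
    let x: Double
    let y: Double
    let creationDate: Double
}

var particles = Set<Particle>()

let first = Particle(x: 0.5, y: 0.5, creationDate: 100)
let second = Particle(x: 0.5, y: 0.5, creationDate: 101)

particles.insert(first)
particles.insert(second)

// Inserting a value equal to an existing member leaves the set unchanged.
particles.insert(Particle(x: 0.5, y: 0.5, creationDate: 100))

// Removal needs no index bookkeeping: the value itself is the key.
particles.remove(first)

print(particles.count)
```

Because equality is based on all three stored values, two particles created at the same position still count as different members as long as their creation dates differ.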

One level up from that is the particle system itself, which needs three pieces of information:

- The image it should render.

- A sequence containing all the particles that are active right now.

- Where it should be placed in our UI.

The first two of those are straightforward, but the third needs to be treated carefully: we don’t want to have to hard-code values such as the width or height of our system, because it wouldn’t scale well across various device sizes. So, instead we’re going to use the same system SwiftUI uses for things like anchors and gradient positions: the UnitPoint type, which stores its values from 0 through 1 for both X and Y coordinates.

Create another new Swift file called ParticleSystem.swift, and give it this code:

import SwiftUI

class ParticleSystem {
    let image = Image("spark")
    var particles = Set<Particle>()
    var center = UnitPoint.center
}

To bring that to life, we need a way to update our particles – to remove any that are old, and create new ones regularly. For now this will just be as simple as creating a new particle whenever a function is called, but we’ll add to it later on.

Add this to the ParticleSystem class now:

func update(date: TimeInterval) {
    let newParticle = Particle(x: center.x, y: center.y)
    particles.insert(newParticle)
}

The final part of our initial step is to write some SwiftUI code to render our particles. This can be done really efficiently thanks to SwiftUI’s TimelineView and Canvas: the former lets us render content on a frequent interval, and the latter lets us render text, images, shapes, and more.

This combination is perfect for our purposes because it lets us render the particle image again and again – once for every particle – and so is extremely efficient.

So, we can start by adding a property to ContentView that will store our particle system:

@State private var particleSystem = ParticleSystem()

Yes, that uses @State even though ParticleSystem is a class – it effectively acts as a cache for the reference type, without triggering changes when any part of the class changes.

Now we can fill in the basics of our view’s body: we’re going to create a TimelineView on an animation schedule, which will give us smooth movement, then place inside that a Canvas so we can do our custom particle drawing. The initializers for both of these types pass in values for us to use: how fast the timeline updates and what its current time is, and a drawing context and size respectively.

Start by replacing your existing ContentView body with this:

TimelineView(.animation) { timeline in
    Canvas { context, size in
        // drawing code here
    }
}
.ignoresSafeArea()
.background(.black)

I’ve given that a black, edge-to-edge background so our particles stand out nice and clearly.

Inside the drawing code we need to do two things:

- Call our particle system’s update() method with the current date as a TimeInterval. This isn’t used right now, but will be important shortly.

- Draw each particle. Remember, particle positions are stored as X/Y values between 0 and 1, where 0 is the left or top edge, and 1 is the right or bottom edge. So, we can multiply each particle’s position by the size of our canvas to get the actual drawing position.

Replace the // drawing code here comment with this:

let timelineDate = timeline.date.timeIntervalSinceReferenceDate
particleSystem.update(date: timelineDate)

for particle in particleSystem.particles {
    let xPos = particle.x * size.width
    let yPos = particle.y * size.height
    context.draw(particleSystem.image, at: CGPoint(x: xPos, y: yPos))
}

That’s enough to make our program run, so give it a try! If everything has gone to plan, you should see a white circle in the center and not much else. That’s hardly impressive by any standard, particularly given I said we could move fast with SwiftUI.

Going up a gear

Most of our work so far was creating models for our particles and particle system types – we haven’t actually written much SwiftUI code just yet.

In fact, it takes just one modifier on TimelineView to start to bring this whole thing to life. It’s going to be a bit of a shortcut because really it’s just a starting point so you can see what our code actually does, but that’s okay – we’ll replace it soon enough.

Add this modifier to the TimelineView now, before the other two modifiers:

.gesture(
    DragGesture(minimumDistance: 0)
        .onChanged { drag in
            particleSystem.center.x = drag.location.x / UIScreen.main.bounds.width
            particleSystem.center.y = drag.location.y / UIScreen.main.bounds.height
        }
)

That tells SwiftUI that every time the user moves their finger, we should update the X/Y coordinates of our particle system to match their finger’s location.

Notice how we divide the touch location by the width and height of the user’s screen? This matters, because the touch location will be absolute X/Y coordinates, whereas we want values between 0 and 1.
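The two conversions, absolute to unit and unit back to absolute, are easy to check in isolation. This is only a sketch: the function names and the sizes are hypothetical, and it assumes the touch coordinates and the screen size share the same origin, which is the case when the canvas fills the whole screen.

```swift
// Convert an absolute position into the 0...1 range the particle system stores.
func normalized(_ value: Double, in length: Double) -> Double {
    value / length
}

// Convert a stored 0...1 value back into an absolute drawing position.
func absolute(_ unit: Double, in length: Double) -> Double {
    unit * length
}

// Hypothetical numbers: a touch 100 points across a 400-point-wide screen.
let unitX = normalized(100, in: 400)

// The same particle drawn on a canvas twice as wide lands twice as far across.
let drawX = absolute(unitX, in: 800)

print(unitX, drawX) // 0.25 200.0
```

Storing the unit value rather than the raw touch position is what lets the same particle data scale to any canvas size.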

Go ahead and run the app again and you’ll see it’s a lot better – you can drag your finger around to draw right onto the screen. It’s neat, but we can do so much better!

For example, we could make older lines fade away after 1 second by adjusting the opacity of our drawing context. Put this before the call to context.draw():

context.opacity = 1 - (timelineDate - particle.creationDate)

That subtracts the particle’s creation date from our timeline date, which will tell us how old the particle is. If we then subtract that age from 1, we’ll make older particles fade away. For example, particles that were just made will have an age of 0, so will have an opacity of 1 - 0 or just 1.
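Here’s the same fade calculation as a small standalone function, so you can plug in a few ages and see what comes out. The function name is hypothetical, and the clamp to 0 is an addition in this sketch: the article’s code assigns the raw value, which goes negative once a particle is more than a second old.

```swift
// Opacity for a particle, given the current time and its creation time
// (both in seconds). The max(0, …) clamp is an addition for this sketch.
func fadeOpacity(now: Double, created: Double) -> Double {
    let age = now - created
    return max(0, 1 - age)
}

print(fadeOpacity(now: 10, created: 10))    // brand new: 1.0
print(fadeOpacity(now: 10.25, created: 10)) // a quarter second old: 0.75
print(fadeOpacity(now: 12, created: 10))    // older than one second: 0.0
```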

If you run it again you’ll see the result is looking a lot better already – as you drag your finger around it’s like you’re drawing with light on the screen.

But we can do even better! With another small change in our drawing code we can tell SwiftUI to blend our graphics together so that overlapping particles get brighter and brighter, as if they were merging together.

Add this just after the call to particleSystem.update():

context.blendMode = .plusLighter

This looks particularly effective when we add some color to the particles, which is another one-liner in SwiftUI. Add this after the previous code:

context.addFilter(.colorMultiply(.green))

Much better!

Our code looks great so far, but before we move on there’s one important change we need to make: although we’re making old particles fade out, we aren’t actually destroying them. That means SwiftUI is drawing thousands of invisible particles, which will chew up a lot of RAM and CPU time.

The fix here is to upgrade the update() method so that it removes old particles once they are over a second old. We can do that by subtracting 1 from whatever date was passed in, looping over all the particles to see which ones were created before that date, and removing any that are too old.

Put this at the start of the update() method:

let deathDate = date - 1

for particle in particles {
    if particle.creationDate < deathDate {
        particles.remove(particle)
    }
}

And now our little particle system is done!
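As an aside, the same pruning rule can also be written as a pure function using filter, which makes it easy to test on its own. This is an alternative sketch rather than the code used in this article; the pruned(_:at:lifetime:) name and the explicit creationDate values are assumptions made for the example.

```swift
// Simplified stand-in for the article's Particle, with an explicit creation date.
struct Particle: Hashable {
    let x: Double
    let y: Double
    let creationDate: Double
}

// Keep only the particles created within the last `lifetime` seconds.
// Set.filter returns a Set, so the result can be assigned straight back.
func pruned(_ particles: Set<Particle>, at date: Double, lifetime: Double = 1) -> Set<Particle> {
    let deathDate = date - lifetime
    return particles.filter { $0.creationDate >= deathDate }
}

let all: Set<Particle> = [
    Particle(x: 0.5, y: 0.5, creationDate: 10),   // too old at time 11.5
    Particle(x: 0.5, y: 0.5, creationDate: 10.5), // exactly one second old: kept
    Particle(x: 0.5, y: 0.5, creationDate: 11.4)  // fresh: kept
]

let survivors = pruned(all, at: 11.5)
print(survivors.count)
```

Both versions apply the same rule: a particle goes only when its creation date is strictly earlier than the cutoff.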

Time for rainbows

When I showed my code to my daughter, she said “it’s nice, but can you make it do rainbows…?” Of course we can! In fact, it’s only really a small adjustment from our current code – rather than giving the entire particle system a uniform color, we need to give each particle its own color, moving across the color spectrum.

This takes all of four steps.

First, we need to add a property to the Particle struct so that particles have their own color:

let hue: Double

Second, we need to add another hue property, this time to the ParticleSystem class, so that we can track the current hue being used to generate particles – this allows us to move smoothly through the rainbow:

var hue = 0.0

Third, inside update() we need to create our new particles using the current hue, then adjust it upwards a little. Hues run the range from 0 through 1, so once we go beyond that range we’ll subtract 1 to return to the start of the spectrum.
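To get a feel for how the hue cycles, here’s a minimal sketch that runs the same step-and-wrap logic 250 times and records each value. The loop count and the hues array are only there for illustration.

```swift
var hue = 0.0
var hues: [Double] = []

// Simulate 250 frames: record the hue a new particle would get,
// then step forward and wrap back once we pass the end of the range.
for _ in 0..<250 {
    hues.append(hue)
    hue += 0.01
    if hue > 1 { hue -= 1 }
}

// Every recorded value stays inside 0...1, so it is always a valid hue.
print(hues.allSatisfy { (0...1).contains($0) })
```

At 0.01 per update it takes roughly 100 updates to travel through the whole spectrum once.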

Replace the existing new particle code with this:

let newParticle = Particle(x: center.x, y: center.y, hue: hue)
particles.insert(newParticle)
hue += 0.01
if hue > 1 { hue -= 1 }

And finally, we need to delete the existing call to addFilter(), because that adds a single color. Instead, we need to add a unique filter for each particle, which raises an interesting problem: we’re using context.addFilter() right now, but how do we remove that filter to add a different one?

The answer is that we can’t: once a filter is added it can’t be removed. Fortunately, the GraphicsContext that Canvas passes us is a struct with value semantics, which means we can take a copy of our context, and any modifications to that copy won’t affect the original context – we can safely apply a filter to just one particle, rather than having it affect all particles.
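If value semantics are new to you, this minimal sketch shows the idea using a made-up struct. DrawingState is a hypothetical stand-in, not SwiftUI’s GraphicsContext, but it copies in the same way: changing the copy leaves the original untouched.

```swift
// Hypothetical stand-in for a drawing context; NOT SwiftUI's GraphicsContext.
struct DrawingState {
    var filters: [String] = []
    var opacity = 1.0

    mutating func addFilter(_ name: String) {
        filters.append(name)
    }
}

let context = DrawingState()

// Assigning a struct makes an independent copy.
var contextCopy = context
contextCopy.addFilter("red")
contextCopy.opacity = 0.5

print(context.filters.isEmpty) // true: the original never saw the filter
print(contextCopy.filters)     // ["red"]
```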

So, replace your existing drawing code with this:

var contextCopy = context
contextCopy.addFilter(.colorMultiply(Color(hue: particle.hue, saturation: 1, brightness: 1)))
contextCopy.opacity = 1 - (timelineDate - particle.creationDate)
contextCopy.draw(particleSystem.image, at: CGPoint(x: xPos, y: yPos))

That creates a color based on the particle’s current hue, but applies it only to the context copy so that it won’t affect future particles – nice!

One last tweak

Before we take a surprising turn in a very different direction, I want to add one more fun tweak to our drawing: rather than just drawing each particle once, we can instead draw it four times at various positions, creating a symmetry effect for our graphics. Again, this takes very little work, and looks fantastic!

First, we’re going to add a property to ContentView that holds an array of flip data – whether to flip horizontally or vertically. We’ll have four elements in here, to draw four quadrants on our canvas. Add this property now:

let options: [(flipX: Bool, flipY: Bool)] = [
    (false, false),
    (true, false),
    (false, true),
    (true, true)
]

Now we need to adjust our particle-drawing loop again. To avoid doing extra work, we can copy the context, add the color filter, and adjust the opacity once per particle, but then loop over the options array to flip the X and Y positions as needed.
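The flipping arithmetic is simple enough to try on its own first. This sketch uses a hypothetical mirroredPositions function and made-up sizes to show the four positions produced from one point.

```swift
let options: [(flipX: Bool, flipY: Bool)] = [
    (false, false),
    (true, false),
    (false, true),
    (true, true)
]

// Return the original position plus its three mirror images.
// A flipped coordinate is measured from the opposite edge instead.
func mirroredPositions(x: Double, y: Double, width: Double, height: Double) -> [(x: Double, y: Double)] {
    options.map { option in
        (x: option.flipX ? width - x : x,
         y: option.flipY ? height - y : y)
    }
}

// Hypothetical values: a point near the top-left of a 100×200 canvas.
let positions = mirroredPositions(x: 10, y: 20, width: 100, height: 200)

for position in positions {
    print(position.x, position.y)
}
// 10.0 20.0
// 90.0 20.0
// 10.0 180.0
// 90.0 180.0
```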

Replace your particle loop with this:

for particle in particleSystem.particles {
    var contextCopy = context
    contextCopy.addFilter(.colorMultiply(Color(hue: particle.hue, saturation: 1, brightness: 1)))
    contextCopy.opacity = 1 - (timelineDate - particle.creationDate)

    for option in options {
        var xPos = particle.x * size.width
        var yPos = particle.y * size.height

        if option.flipX {
            xPos = size.width - xPos
        }

        if option.flipY {
            yPos = size.height - yPos
        }

        contextCopy.draw(particleSystem.image, at: CGPoint(x: xPos, y: yPos))
    }
}

Okay, that’s enough futzing around with Canvas – I hope you’ll agree it’s lots of fun! But we’re not done yet…

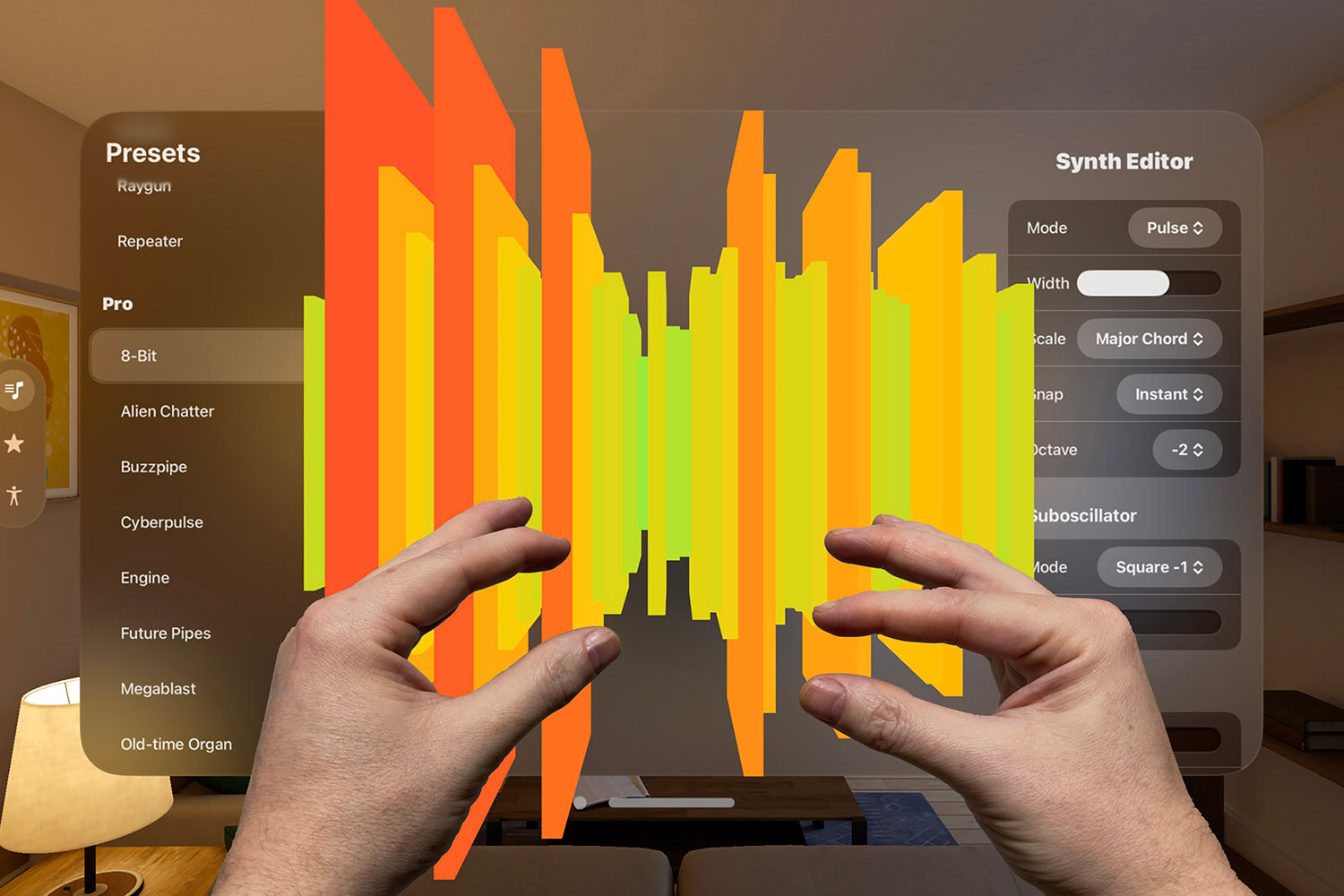

Who needs hands, anyway?

So far this is all pretty standard stuff, but it’s time to take this little experiment in a very different direction. Right now we have a little hack in place that lets us adjust the particle locations using a finger, but that’s so dull – we can make something much more interesting thanks to Core Motion!

Core Motion provides a brilliantly simple API for reading physical device orientation, all using CMMotionManager. We can ask this thing to start delivering motion updates, and pass it a closure to use when new motion data is available. This should not be run on the main queue because motion data can be delivered so fast it might slow down your UI.

We don’t want to pollute our SwiftUI code with any Core Motion work, so we can wrap it all up in a custom class. To do that, create a new Swift file called MotionManager.swift, replace its Foundation import with CoreMotion, then give it this code:

class MotionManager {
    private var motionManager = CMMotionManager()
    var pitch = 0.0
    var roll = 0.0
    var yaw = 0.0
}

That sets default values for all three pieces of motion data we care about, but obviously as soon as the MotionManager class is created we want to start looking for real data from Core Motion. This can be done by adding an initializer to the class, which will call startDeviceMotionUpdates(), like this:

init() {
    motionManager.startDeviceMotionUpdates(to: OperationQueue()) { [weak self] motion, error in
        guard let self = self, let motion = motion else { return }
        self.pitch = motion.attitude.pitch
        self.roll = motion.attitude.roll
        self.yaw = motion.attitude.yaw
    }
}

Now that we start reading updates, we also need to stop reading updates when this class is destroyed, so add this too:

deinit {
    motionManager.stopDeviceMotionUpdates()
}

That’s our new class done!

Back in ContentView we can put it into action straight away by adding a property to create and store a MotionManager object:

@State private var motionHandler = MotionManager()

And now we just need to update the particle system’s center value based on the motion handler data – put this after the call to particleSystem.update() in your drawing code:

particleSystem.center = UnitPoint(x: 0.5 + motionHandler.roll, y: 0.5 + motionHandler.pitch)

If you run the app again you’ll see we can control the movement now just by tilting the phone.
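Core Motion reports roll and pitch in radians, so adding them straight to 0.5 means a tilt of half a radian is enough to push the center to the edge of the screen, and anything beyond that moves it off screen. If you’d rather keep the center visible, one option is to clamp the result. This is only a sketch of that idea: the function name is hypothetical, and the clamping isn’t part of the code in this article.

```swift
// Map roll and pitch (in radians) onto a 0...1 unit position, starting from
// the center. The clamp keeps the result on screen; it is an optional extra.
func clampedCenter(roll: Double, pitch: Double) -> (x: Double, y: Double) {
    let x = min(max(0.5 + roll, 0), 1)
    let y = min(max(0.5 + pitch, 0), 1)
    return (x: x, y: y)
}

let level = clampedCenter(roll: 0, pitch: 0)           // device held flat
let tilted = clampedCenter(roll: 0.25, pitch: -0.25)   // a gentle tilt
let extreme = clampedCenter(roll: 2, pitch: -2)        // far past the edges

print(level, tilted, extreme)
```

You could then pass the x and y values to UnitPoint(x:y:) in place of the unclamped sums.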

That’s neat, but our story isn’t done just yet. You see, having to move your hand around all that time can be quite tiring, because modern iPhones are just so darn heavy. How about we make the movement happen without having to move our hand at all?

To do that, go to the Info tab for your target, then add a new key called “Privacy - Motion Usage Description”. Give it the value “We need to read your movements.”

What did that change? Well, nothing at all – yet. But now we can make one tiny change to the MotionManager class to do something quite remarkable. Find this line:

private var motionManager = CMMotionManager()

And replace it with this:

private var motionManager = CMHeadphoneMotionManager()

I’ve been wearing AirPods the entire time I was working, and now you know why: when we run the app now iOS will show a permission prompt asking the user if the app can read their motion data, and if they approve it then I can control the whole thing just by tilting my head!

Where next?

You’ve seen how we can use TimelineView and Canvas to create beautiful effects, how we can layer on improvements such as coloring, blend modes, symmetry and more, then tie the whole thing into the accelerometers and even AirPods to create something quite remarkable.

But even then, we’ve only just scratched the surface of particle systems here – there is so much more they can do, and of course SwiftUI is more than capable of delivering it all. I actually made a two-hour livestream going into all sorts of detail about particles, making an app where you can customize every aspect of the particle system using an interactive UI. Visit https://bit.ly/swiftui-particles to find out more.

Anyway, I promised you something fun, beautiful, and unlike anything you’ve seen before, and hopefully you’ll agree it was worth the time – I can’t think of the last time I saw anyone use AirPods to draw rainbow lights on their phone!
